Self-host GitHub Actions runners with Actions Runner Controller (ARC) on AWS

Self-host GitHub Actions runners with Actions Runner Controller (ARC) on AWS. Includes Terraform code and production-ready configurations for `arc` and `karpenter`.

This post walks through setting up GitHub Actions runners with ARC (Actions Runner Controller) on AWS using EKS. It includes Terraform code for provisioning the infrastructure and a custom image for the runners, along with cost and performance optimizations using Karpenter for node autoscaling and other best practices.

Setup

We set up Karpenter v1.0.2 and EKS with Terraform. The complete setup code is available here: https://github.com/WarpBuilds/github-arc-setup

EKS Cluster Setup

The EKS cluster was provisioned using Terraform and runs on Kubernetes v1.30.

A key aspect of our setup is a dedicated node group for essential add-ons, isolated from other workloads. The `default-ng` node group uses t3.xlarge instances and carries a `CriticalAddonsOnly=true:NoSchedule` taint, so only pods that tolerate it, such as networking, DNS, node management, and the ARC controller and listeners, can be scheduled on these nodes.

```hcl
module "eks" {
  source = "terraform-aws-modules/eks/aws"

  cluster_name    = local.cluster_name
  cluster_version = "1.30"

  cluster_endpoint_public_access = true

  cluster_addons = {
    coredns                = {}
    eks-pod-identity-agent = {}
    kube-proxy             = {}
    vpc-cni                = {}
  }

  subnet_ids = var.private_subnet_ids
  vpc_id     = var.vpc_id

  eks_managed_node_groups = {
    # Dedicated node group for cluster add-ons and the ARC controller/listeners.
    default-ng = {
      # v20 of the module sizes managed node groups with *_size;
      # desired_capacity/max_capacity/min_capacity are silently ignored.
      desired_size = 2
      max_size     = 5
      min_size     = 1

      instance_types = ["t3.xlarge"]
      subnet_ids     = var.private_subnet_ids

      # Only pods tolerating CriticalAddonsOnly=true:NoSchedule can land here.
      taints = {
        addons = {
          key    = "CriticalAddonsOnly"
          value  = "true"
          effect = "NO_SCHEDULE"
        }
      }
    }
  }

  # Lets the Karpenter EC2NodeClass discover the node security group by tag.
  node_security_group_tags = merge(local.tags, {
    "karpenter.sh/discovery" = local.cluster_name
  })

  enable_cluster_creator_admin_permissions = true

  tags = local.tags
}
```

Private Subnets and NAT Gateway

The EKS nodes run in private subnets and reach external resources, such as GitHub and container registries, through a NAT Gateway. This gives nodes outbound connectivity without exposing them to inbound traffic from the internet.

Karpenter for Autoscaling

Karpenter provides fast and flexible autoscaling of the nodes to optimize cost and resource efficiency. We explore a few variations of configuration to reduce over-provisioning and unnecessary costs.

- Karpenter v1.0.2: We chose the latest version of Karpenter available at the time of writing.

- Amazon Linux 2023 (AL2023): The default NodeClass provisions nodes with AL2023, and each node gets a 300GiB gp3 EBS root volume. This extra storage is crucial for disk-heavy workloads such as CI/CD runners and prevents the out-of-disk errors commonly encountered with default node storage (17GiB). Size the volume according to the number of jobs expected to run on a node in parallel.

- Private Subnet Selection: The NodeClass selects the private subnets created earlier through the `karpenter.sh/discovery` tag, so nodes launch in the same isolated network as the rest of the EKS cluster.

- m7a Node Families: Using the NodePool resource, we restricted node provisioning to the m7a instance family. These instances were chosen for their performance-to-cost efficiency and are only provisioned in the us-east-1a and us-east-1b Availability Zones.

- On-demand Instances: While Karpenter supports Spot Instances for cost savings, we opted for on-demand instances for an equivalent cost comparison.

- Consolidation Policy: We set `consolidationPolicy: WhenEmpty` with a 5-minute `consolidateAfter` delay. Karpenter only removes a node after it has run no workload pods (DaemonSets aside) for at least 5 minutes, which avoids premature terminations that could disrupt workflows and keeps capacity stable during peak load.
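How much disk a node needs depends on how many jobs share it. The sketch below is a back-of-the-envelope estimate, assuming each concurrent job needs its own workspace (checkout, dependencies, build output) while the runner image is cached once per node. The helper `requiredVolumeGiB` and every input value are hypothetical illustrations, not measurements from this setup.

```javascript
// Rough per-node EBS sizing for CI runner nodes.
// All inputs are illustrative assumptions, not measured values.
function requiredVolumeGiB({ parallelJobs, perJobWorkspaceGiB, imageCacheGiB, osReserveGiB, headroom }) {
  if (parallelJobs < 1) throw new RangeError("parallelJobs must be >= 1");
  // Each concurrent job needs its own workspace on the node's disk.
  const workspaces = parallelJobs * perJobWorkspaceGiB;
  // The OS and the cached runner image are shared by every pod on the node.
  const base = osReserveGiB + imageCacheGiB;
  // Apply a safety margin and round up to a whole GiB.
  return Math.ceil((base + workspaces) * (1 + headroom));
}

// Hypothetical example: a node expected to run 4 jobs in parallel.
console.log(requiredVolumeGiB({
  parallelJobs: 4,
  perJobWorkspaceGiB: 40,
  imageCacheGiB: 10,
  osReserveGiB: 20,
  headroom: 0.25,
})); // 238
```

Because the workspace term scales with `parallelJobs`, a pool of large nodes packing many jobs needs a much bigger volume than a one-job-per-node pool.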

```hcl
module "karpenter" {
  source = "terraform-aws-modules/eks/aws//modules/karpenter"

  cluster_name = module.eks.cluster_name

  # Controller IAM via EKS Pod Identity. The association defaults to the
  # kube-system namespace, so it must match the Helm release namespace below.
  enable_pod_identity             = true
  create_pod_identity_association = true
  namespace                       = "karpenter"

  # Instance profile referenced by the EC2NodeClass.
  create_instance_profile = true

  node_iam_role_additional_policies = {
    AmazonSSMManagedInstanceCore = "arn:aws:iam::aws:policy/AmazonSSMManagedInstanceCore"
  }

  tags = local.tags
}

resource "helm_release" "karpenter-crd" {
  namespace        = "karpenter"
  create_namespace = true
  name             = "karpenter-crd"
  repository       = "oci://public.ecr.aws/karpenter"
  chart            = "karpenter-crd"
  version          = "1.0.2"
  wait             = true
  values           = []
}

resource "helm_release" "karpenter" {
  depends_on = [helm_release.karpenter-crd]

  namespace        = "karpenter"
  create_namespace = true
  name             = "karpenter"
  repository       = "oci://public.ecr.aws/karpenter"
  chart            = "karpenter"
  version          = "1.0.2"
  wait             = true
  skip_crds        = true # CRDs come from the karpenter-crd release

  values = [
    <<-EOT
    serviceAccount:
      name: ${module.karpenter.service_account}
    settings:
      clusterName: ${module.eks.cluster_name}
      clusterEndpoint: ${module.eks.cluster_endpoint}
    EOT
  ]
}

resource "kubectl_manifest" "karpenter_node_class" {
  yaml_body = <<-YAML
    apiVersion: karpenter.k8s.aws/v1
    kind: EC2NodeClass
    metadata:
      name: default
    spec:
      # Karpenter v1 selects AMIs via amiSelectorTerms; the alias implies AL2023.
      amiSelectorTerms:
        - alias: al2023@latest
      detailedMonitoring: true
      blockDeviceMappings:
        - deviceName: /dev/xvda
          ebs:
            volumeSize: 300Gi
            volumeType: gp3
            deleteOnTermination: true
            iops: 5000
            throughput: 500 # MiB/s
      instanceProfile: ${module.karpenter.instance_profile_name}
      subnetSelectorTerms:
        - tags:
            karpenter.sh/discovery: ${module.eks.cluster_name}
      securityGroupSelectorTerms:
        - tags:
            karpenter.sh/discovery: ${module.eks.cluster_name}
      # Tags applied to launched instances (used for cost tracking).
      tags:
        karpenter.sh/discovery: ${module.eks.cluster_name}
        Project: arc-test-praj
  YAML

  depends_on = [
    helm_release.karpenter,
    helm_release.karpenter-crd,
  ]
}

resource "kubectl_manifest" "karpenter_node_pool" {
  yaml_body = <<-YAML
    apiVersion: karpenter.sh/v1
    kind: NodePool
    metadata:
      name: default
    spec:
      template:
        spec:
          # v1 requires group/kind on the reference. Instance tags such as
          # Project are set on the EC2NodeClass, not on the NodePool.
          nodeClassRef:
            group: karpenter.k8s.aws
            kind: EC2NodeClass
            name: default
          requirements:
            - key: "karpenter.k8s.aws/instance-category"
              operator: In
              values: ["m"]
            - key: "karpenter.k8s.aws/instance-family"
              operator: In
              values: ["m7a"]
            - key: "karpenter.k8s.aws/instance-cpu"
              operator: In
              values: ["4", "8", "16", "32", "64"]
            - key: "karpenter.k8s.aws/instance-generation"
              operator: Gt
              values: ["2"]
            - key: "topology.kubernetes.io/zone"
              operator: In
              values: ["us-east-1a", "us-east-1b"]
            - key: "kubernetes.io/arch"
              operator: In
              values: ["amd64"]
            - key: "karpenter.sh/capacity-type"
              operator: In
              values: ["on-demand"]
      limits:
        cpu: 1000
      disruption:
        # Remove a node only after it has had no workload pods for 5 minutes.
        consolidationPolicy: WhenEmpty
        consolidateAfter: 5m
  YAML

  depends_on = [
    kubectl_manifest.karpenter_node_class,
  ]
}
```

Variant #2: We also ran a setup with a single job per node to compare the performance and cost implications of running multiple jobs on a single node. For this variant, the NodePool's `instance-cpu` requirement was restricted to 8 vCPUs:

```diff
 - key: "karpenter.k8s.aws/instance-cpu"
   operator: In
-  values: ["4", "8", "16", "32", "64"]
+  values: ["8"]
```

Actions Runner Controller and Runner Scale Set

Once Karpenter was configured, we proceeded to set up the GitHub Actions Runner Controller (ARC) and the Runner Scale Set using Helm.

The ARC controller was installed with Helm using the following command and values:

```bash
helm upgrade arc \
  --namespace "${NAMESPACE}" \
  oci://ghcr.io/actions/actions-runner-controller-charts/gha-runner-scale-set-controller \
  --values runner-set-values.yaml \
  --install
```

```yaml
tolerations:
  - key: "CriticalAddonsOnly"
    operator: "Equal"
    value: "true"
    effect: "NoSchedule"
```

This configuration applies tolerations to the controller so it can run on nodes with the `CriticalAddonsOnly` taint, i.e. the `default-ng` node group, away from the runner workloads. A toleration only permits scheduling onto tainted nodes; it does not force it, so strictly pinning the controller to `default-ng` would also need a nodeSelector or node affinity.
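Taint and toleration matching is what keeps runner pods off `default-ng`. The JavaScript sketch below re-implements the Kubernetes matching rule in simplified form (it ignores `tolerationSeconds` and treats `PreferNoSchedule` as non-blocking); `toleratesTaint` and `canSchedule` are hypothetical helper names. Note that the EKS `NO_SCHEDULE` effect from the Terraform config appears on the node as `NoSchedule`.

```javascript
// Simplified version of the Kubernetes toleration-matches-taint rule.
function toleratesTaint(toleration, taint) {
  // An empty effect on the toleration matches any effect.
  if (toleration.effect && toleration.effect !== taint.effect) return false;
  // An empty key (only valid with Exists) matches any key.
  if (toleration.key && toleration.key !== taint.key) return false;
  const op = toleration.operator || "Equal"; // default operator is Equal
  if (op === "Exists") return true;
  if (op === "Equal") return (toleration.value ?? "") === (taint.value ?? "");
  return false;
}

// A pod can be placed on a node only if every hard taint
// (NoSchedule / NoExecute) is tolerated; PreferNoSchedule is a soft preference.
function canSchedule(podTolerations, nodeTaints) {
  return nodeTaints
    .filter((t) => t.effect === "NoSchedule" || t.effect === "NoExecute")
    .every((t) => podTolerations.some((tol) => toleratesTaint(tol, t)));
}

const criticalTaint = { key: "CriticalAddonsOnly", value: "true", effect: "NoSchedule" };
const controllerTolerations = [
  { key: "CriticalAddonsOnly", operator: "Equal", value: "true", effect: "NoSchedule" },
];

console.log(canSchedule(controllerTolerations, [criticalTaint])); // true: controller on default-ng
console.log(canSchedule([], [criticalTaint])); // false: runner pod on default-ng
console.log(canSchedule([], [])); // true: runner pod on an untainted Karpenter node
```

Since the runner pod template in this setup carries no tolerations, runner pods can only land on the untainted nodes that Karpenter provisions.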

Next, we set up the Runner Scale Set using another Helm command:

```bash
helm upgrade warp-praj-arc-test \
  oci://ghcr.io/actions/actions-runner-controller-charts/gha-runner-scale-set \
  --namespace "${NAMESPACE}" \
  --values values.yaml \
  --install
```

The key points for our Runner Scale Set configuration:

- GitHub App Integration: We connected our runners to GitHub via a GitHub App, enabling the runners to operate at the organization level.

- Listener Tolerations: Like the controller, the listener template includes tolerations that allow it to run on the `default-ng` node group.

- Custom Image for Runners: We used a custom Docker image for the runner pods (detailed in the next section).

- Resource Requirements: To simulate high-performance runners, each runner pod requests 4 vCPU and 16 GiB of RAM and is limited to 8 vCPU and 32 GiB, matching the 8x runner size used in the workflows.

```yaml
githubConfigUrl: "https://github.com/Warpbuilds"

githubConfigSecret:
  github_app_id: "<APP_ID>"
  github_app_installation_id: "<APP_INSTALLATION_ID>"
  github_app_private_key: |
    -----BEGIN RSA PRIVATE KEY-----
    [your-private-key-contents]
    -----END RSA PRIVATE KEY-----
  github_token: ""

# Allow the listener to schedule on the tainted default-ng nodes.
listenerTemplate:
  spec:
    containers:
      - name: listener
        securityContext:
          runAsUser: 1000
    tolerations:
      - key: "CriticalAddonsOnly"
        operator: "Equal"
        value: "true"
        effect: "NoSchedule"

template:
  spec:
    containers:
      - name: runner
        image: <public_ecr_image_url>
        command: ["/home/runner/run.sh"]
        resources:
          # The scheduler places pods by requests; limits cap burst usage.
          requests:
            cpu: "4"
            memory: "16Gi"
          limits:
            cpu: "8"
            memory: "32Gi"

controllerServiceAccount:
  namespace: arc-systems
  name: arc-gha-rs-controller
```

Custom Image for Runner Pods

By default, the Runner Scale Sets use GitHub's official actions-runner image. However, this image doesn't include essential utilities such as wget, curl, and git, which are required by various workflows.

To address this, we built a custom Docker image on top of GitHub's runner image that adds the necessary tools. The image was hosted in a public ECR repository and used by the runner pods throughout our tests, so workflows ran without missing dependencies.

```dockerfile
FROM ghcr.io/actions/actions-runner:2.319.1

# Install the tools workflows expect, and clean the apt cache in the same
# layer so the package lists don't persist in the image.
RUN sudo apt-get update \
    && sudo apt-get install -y wget curl unzip git \
    && sudo apt-get clean \
    && sudo rm -rf /var/lib/apt/lists/*
```

This ensured our runners always had the required utilities, preventing missing-tool errors and reducing friction during workflow runs.
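One way to confirm an image really ships these tools is a small smoke check run as an early workflow step. The following is a minimal Node.js sketch, assuming a POSIX `PATH` and that Node.js is available where it runs; `findOnPath` and `missingTools` are hypothetical helpers, not part of ARC or the runner image.

```javascript
const fs = require("fs");
const path = require("path");

// Return the full path of an executable file named `tool` on PATH, or null.
function findOnPath(tool, envPath = process.env.PATH || "") {
  for (const dir of envPath.split(path.delimiter)) {
    if (!dir) continue; // skip empty PATH entries
    const candidate = path.join(dir, tool);
    try {
      // Must be a regular file (statSync follows symlinks) with execute permission.
      if (fs.statSync(candidate).isFile()) {
        fs.accessSync(candidate, fs.constants.X_OK);
        return candidate;
      }
    } catch {
      // Missing or not executable in this directory; keep searching.
    }
  }
  return null;
}

// List the tools that could not be found.
function missingTools(tools, envPath) {
  return tools.filter((tool) => findOnPath(tool, envPath) === null);
}

const missing = missingTools(["wget", "curl", "unzip", "git"]);
console.log(missing.length === 0 ? "All required tools found" : `Missing: ${missing.join(", ")}`);
```

In a real workflow step, exiting with a non-zero code when `missing` is non-empty would fail the job early instead of partway through a build.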

Tagging Infrastructure for Cost Tracking

To track costs during the ARC runs, every infrastructure resource created by this setup is tagged, and cost data is collected at hourly granularity. AWS Cost Explorer then attributes costs to these resources by tag. This was essential for calculating the true cost of running ARC, including EC2, EBS, VPC, S3, NAT Gateway, and data transfer in and out.
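The same tagged costs can be pulled programmatically through Cost Explorer's `GetCostAndUsage` API. This is a sketch, assuming the `Project` tag has been activated as a cost allocation tag and hourly granularity is enabled in Cost Explorer (hourly queries take full timestamps rather than plain dates). `buildCostQuery` and `totalsByService` are hypothetical helpers: the first builds the request parameters, the second sums the `ResultsByTime[].Groups[]` entries of a response.

```javascript
// Build GetCostAndUsage parameters: hourly unblended cost for one tag value,
// grouped by AWS service.
function buildCostQuery(tagKey, tagValue, start, end) {
  return {
    TimePeriod: { Start: start, End: end },
    Granularity: "HOURLY",
    Metrics: ["UnblendedCost"],
    Filter: { Tags: { Key: tagKey, Values: [tagValue] } },
    GroupBy: [{ Type: "DIMENSION", Key: "SERVICE" }],
  };
}

// Collapse a GetCostAndUsage response into { serviceName: totalUSD }.
function totalsByService(response) {
  const totals = {};
  for (const period of response.ResultsByTime ?? []) {
    for (const group of period.Groups ?? []) {
      const service = group.Keys[0];
      // Amounts are returned as strings.
      const amount = Number(group.Metrics.UnblendedCost.Amount);
      totals[service] = (totals[service] ?? 0) + amount;
    }
  }
  return totals;
}
```

With the AWS SDK for JavaScript v3, the parameters would be passed to `new GetCostAndUsageCommand(...)` from `@aws-sdk/client-cost-explorer` and sent with a `CostExplorerClient`; the response can then be handed to `totalsByService`.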

Running workflows

We use PostHog OSS as an example repository to compare costs on a real-world workload of 960 jobs. The duty cycle is a representative 2-hour period with a continuous stream of commits, one per minute, each triggering the Frontend CI workflow.
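The load can be translated into an expected number of concurrently running jobs with Little's law: average jobs in the system equals arrival rate times the average time each job spends in the system. This is a rough estimate, assuming arrivals are spread evenly over the window; `averageConcurrentJobs` is a hypothetical helper and the 3-minute average job time is an illustrative assumption, not a measured value.

```javascript
// Little's law: L = lambda * W.
function averageConcurrentJobs(totalJobs, windowMinutes, avgJobMinutes) {
  const arrivalRatePerMin = totalJobs / windowMinutes; // lambda
  return arrivalRatePerMin * avgJobMinutes;            // L
}

// 960 jobs over the 120-minute window from this benchmark.
// The 3-minute average time per job is an assumed value.
const concurrent = averageConcurrentJobs(960, 120, 3);
console.log(concurrent); // 24

// At 8 vCPU per runner, that average load corresponds to this many vCPUs.
console.log(concurrent * 8); // 192
```

The time per job should include start delay (node spin-up and image pull), not just execution time, and peaks will exceed this average because each commit starts several jobs at once. The result gives a ballpark for the NodePool's `limits.cpu`.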

PostHog's Frontend CI Workflow

To simulate a real-world use case, we used PostHog's Frontend CI workflow. It runs a series of frontend checks, followed by two sets of jobs: one for code quality checks and another that executes a matrix of Jest tests.

You can view the workflow file here: PostHog Frontend CI Workflow

Auto-Commit Simulation Script

To ensure continuous triggering of the Frontend CI workflow, we developed an automated commit script in JavaScript. This script generates commits every minute on the forked PostHog repository, which in turn triggers the CI workflow.

The script is designed to run for two hours, ensuring a consistent workload over an extended period for accurate cost measurement. The results were then analyzed to compare the costs of using ARC versus WarpBuild's BYOC runners.

Commit simulation script:

```javascript
const { exec } = require("child_process");
const fs = require("fs");
const path = require("path");

// Local clone of the forked PostHog repository.
const repoPath = "arc-setup/posthog";
const frontendDir = path.join(repoPath, "frontend");
const logFilePath = path.join(frontendDir, "commit_log.txt");

const intervalTime = 1 * 60 * 1000; // every minute
const maxRunTime = 2 * 60 * 60 * 1000; // 2 hours

const setupGitConfig = () => {
  exec('git config user.name "Auto Commit Script"', { cwd: repoPath });
  // Placeholder address; any address works.
  exec('git config user.email "auto-commit@example.com"', { cwd: repoPath });
};

const makeCommit = () => {
  // Create the frontend directory if it doesn't exist.
  fs.mkdirSync(frontendDir, { recursive: true });

  // Append a timestamped line to commit_log.txt in the frontend directory.
  fs.appendFileSync(
    logFilePath,
    `Auto commit in frontend at ${new Date().toISOString()}\n`,
  );

  // git runs inside repoPath, so pass the path relative to the repo root.
  const relativeLogPath = path.relative(repoPath, logFilePath);

  // Add, commit, and push changes.
  exec(`git add "${relativeLogPath}"`, { cwd: repoPath }, (err) => {
    if (err) return console.error("Error adding file:", err);
    exec(
      `git commit -m "Auto commit at ${new Date().toISOString()}"`,
      { cwd: repoPath },
      (err) => {
        if (err) return console.error("Error committing changes:", err);
        exec("git push origin master", { cwd: repoPath }, (err) => {
          if (err) return console.error("Error pushing changes:", err);
          console.log("Changes pushed successfully");
        });
      },
    );
  });
};

setupGitConfig();
const interval = setInterval(makeCommit, intervalTime);

// Stop the script after 2 hours.
setTimeout(() => {
  clearInterval(interval);
  console.log("Script completed after 2 hours");
}, maxRunTime);
```

Results

Performance and Scalability

The following metrics show the average time taken by ARC runners for jobs in the Frontend CI workflow. All jobs run on the same underlying CPU family (m7a) and request the same resources (vCPU and memory).

| Test | ARC (Varied Node Sizes) | ARC (1 Job Per Node) |
|---|---|---|
| Code Quality Checks | ~9 minutes 30 seconds | ~7 minutes |
| Jest Test (FOSS) | ~2 minutes 10 seconds | ~1 minute 30 seconds |
| Jest Test (EE) | ~1 minute 35 seconds | ~1 minute 25 seconds |

ARC runners with varied node sizes exhibited slower performance primarily because multiple runners shared disk and network resources on the same node, causing bottlenecks despite larger node sizes.

To address these bottlenecks, we tested a 1 Job Per Node configuration with ARC, where each job ran on its own node. This improved every job in the table (Code Quality Checks dropped from ~9.5 to ~7 minutes), but it introduced longer job start delays because a new node has to be provisioned for each job.
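The one-job-per-node behavior follows from how the scheduler packs pods: it places them by resource requests, not limits. The sketch below estimates how many 4 vCPU / 16 GiB runner pods fit on a node, assuming rough allocatable figures (instance capacity minus kubelet reservations and DaemonSet requests); those figures are assumptions rather than measured values, and `podsPerNode` is a hypothetical helper.

```javascript
// How many runner pods fit on one node, judged by resource *requests*.
function podsPerNode(allocatable, request) {
  const byCpu = Math.floor(allocatable.cpu / request.cpu);
  const byMemory = Math.floor(allocatable.memoryGiB / request.memoryGiB);
  return Math.min(byCpu, byMemory); // the scarcer resource decides
}

// Requests from the runner scale set values: 4 vCPU / 16 GiB per pod.
const runnerRequest = { cpu: 4, memoryGiB: 16 };

// Assumed allocatable capacity after system reservations (approximate).
const nodes = {
  "m7a.2xlarge": { cpu: 7.8, memoryGiB: 28 },   // 8 vCPU / 32 GiB instance
  "m7a.8xlarge": { cpu: 31.5, memoryGiB: 118 }, // 32 vCPU / 128 GiB instance
};

for (const [type, allocatable] of Object.entries(nodes)) {
  console.log(type, podsPerNode(allocatable, runnerRequest));
}
// m7a.2xlarge 1
// m7a.8xlarge 7
```

Two pods on an 8-vCPU node would request a full 8 vCPU and 32 GiB, slightly more than is allocatable, so each m7a.2xlarge should hold a single runner pod. Larger nodes in the varied-size pool can pack several jobs, which then share the node's disk and network.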

Note: Job start delays are directly influenced by the time needed to provision a new node and pull the container image. Larger image sizes increase pull times, leading to longer delays. If the image size is reduced, additional tools would need to be installed during the action run, increasing the overall workflow run time.

Node spin-up and image pull take ~45s to 1.5m for `arc` runners. This is a significant overhead for workflows that run multiple jobs.

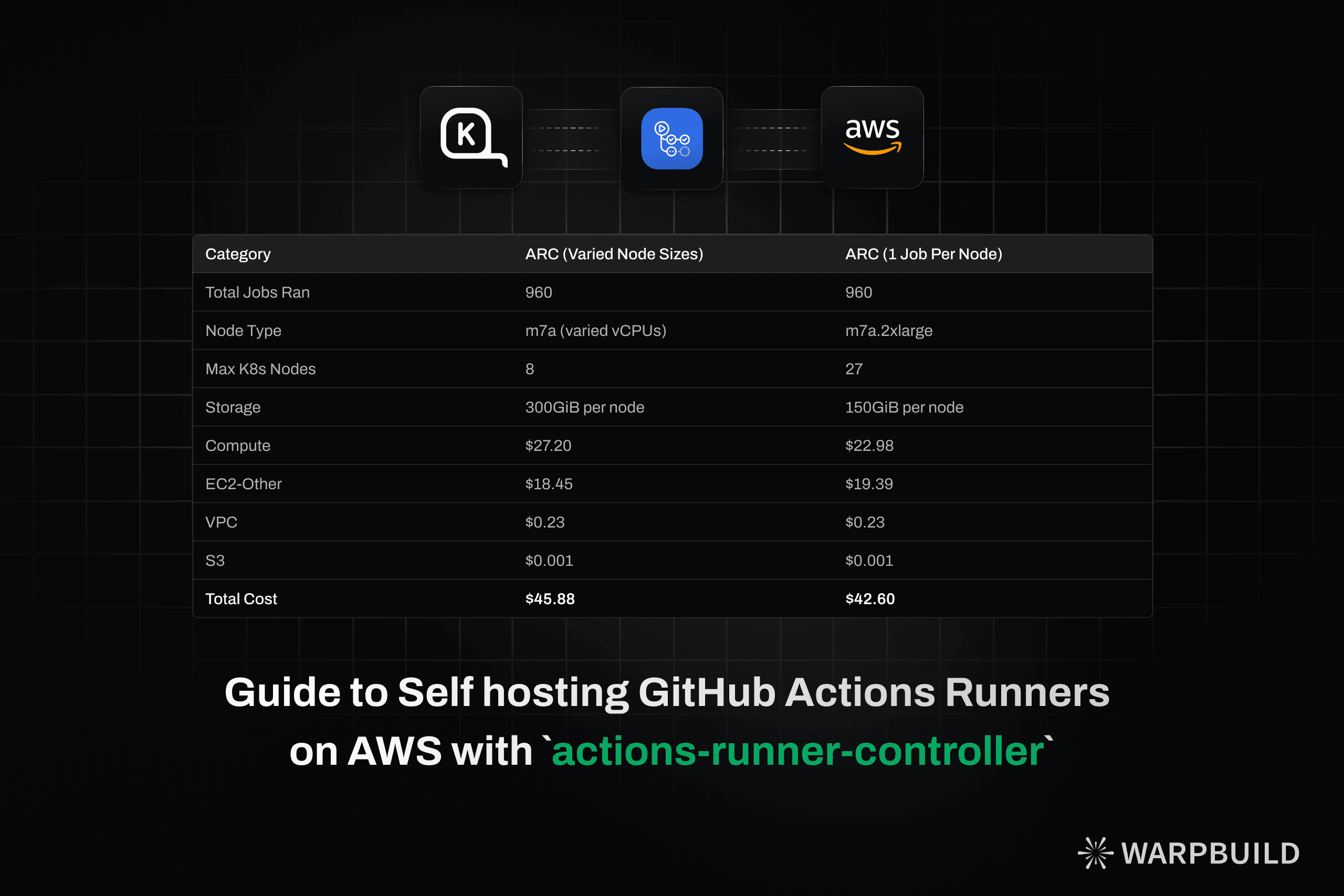

Cost Comparison

| Category | ARC (Varied Node Sizes) | ARC (1 Job Per Node) |
|---|---|---|
| Total Jobs Run | 960 | 960 |
| Node Type | m7a (varied vCPUs) | m7a.2xlarge |
| Max K8s Nodes | 8 | 27 |
| Storage | 300GiB per node | 150GiB per node |
| IOPS | 5000 per node | 5000 per node |
| Throughput | 500 MiB/s per node | 500 MiB/s per node |
| Compute | $27.20 | $22.98 |
| EC2-Other | $18.45 | $19.39 |
| VPC | $0.23 | $0.23 |
| S3 | $0.001 | $0.001 |
| Total Cost | $45.88 | $42.60 |

For the same 960 jobs, ARC with 1 job per node cost $42.60 versus $45.88 with varied node sizes, about 7% less, and it was also the faster setup.
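Normalizing the totals by job count makes the two variants easier to compare. This short sketch recomputes per-job cost and the relative saving from the table's totals; `costPerJob` and `relativeSavings` are hypothetical helper names.

```javascript
// Totals from the cost comparison table (USD, 960 jobs each).
const runs = {
  "ARC (Varied Node Sizes)": { totalUSD: 45.88, jobs: 960 },
  "ARC (1 Job Per Node)": { totalUSD: 42.6, jobs: 960 },
};

function costPerJob({ totalUSD, jobs }) {
  return totalUSD / jobs;
}

// Fraction saved by the candidate relative to the baseline.
function relativeSavings(baselineUSD, candidateUSD) {
  return (baselineUSD - candidateUSD) / baselineUSD;
}

for (const [name, run] of Object.entries(runs)) {
  console.log(`${name}: $${costPerJob(run).toFixed(3)} per job`);
}
// ARC (Varied Node Sizes): $0.048 per job
// ARC (1 Job Per Node): $0.044 per job

const saving = relativeSavings(
  runs["ARC (Varied Node Sizes)"].totalUSD,
  runs["ARC (1 Job Per Node)"].totalUSD,
);
console.log(`1 job per node is ${(saving * 100).toFixed(1)}% cheaper`); // 7.1%
```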

Conclusion

ARC provides a flexible and scalable solution for running GitHub Actions workflows. It is important to configure it correctly to avoid performance bottlenecks and optimize costs.

However, it comes with operational overhead (Kubernetes cluster management, Terraform, etc.) and requires continuous maintenance at scale, including keeping the runner binaries updated.

Despite these challenges, ARC is a powerful tool for running GitHub Actions workflows at scale, and it is 10x cheaper than the default GitHub-hosted runners.

Tip

WarpBuild provides the same flexibility as actions-runner-controller but with none of the operational complexity. WarpBuild runners are also more cost effective than ARC runners, with a ~41% cost saving.

Get started with WarpBuild in ~3 minutes for faster job start times, caching backed by object storage, and easy to use dashboards. Book a call or get started today!
